Critical Metascience Roundup

Update #2

Welcome to Update #2, which summarizes work on:

Barriers and enablers of reproducibility

Cataloguing open research practices in the humanities and social sciences

Reconsidering reproducibility and questionable research practices in animal-based biomedicine

Naming individual studies in metascience work

Barriers and Enablers of Reproducibility

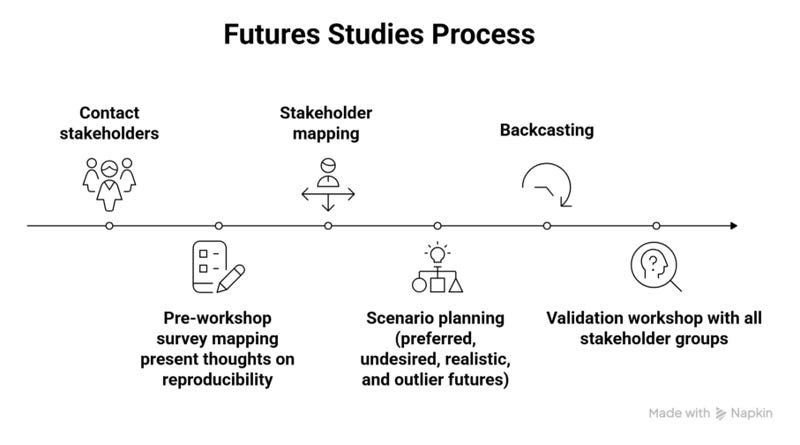

Serge Horbach, Tony Ross-Hellauer and colleagues published the results of a “future studies” analysis of reproducibility. Their 19 research participants included representatives of scholarly publishers, funding agencies, qualitative social scientists, and machine learning researchers.

The results revealed divergent views on whether reproducibility should be made a requirement:

Some participants forcefully argued that the only way to achieve higher levels of reproducibility was by mandating efforts to foster it, for example, through an explicit requirement in grant funding, journal publication or tenure processes. Others, however, were much more skeptical, arguing for the need to remain flexible. This skeptical view included maintaining the option to opt-out of reproducibility efforts and standards, in cases where these are not deemed relevant or feasible. This finding supports recent trends in the literature, which frame reproducibility (and replicability) as neither universally applicable nor feasible across diverse epistemic contexts (Cole et al., 2024; Leonelli, 2018; Penders et al., 2019; Ulpts & Schneider, 2023). Consequently, we recommend the development of guidelines for reproducibility (and/or transparency) practices tailored to specific domain, methodological and epistemic contexts.

Horbach, S. P., Cole, N. L., Kopeinik, S., Leitner, B., Ross-Hellauer, T., & Tijdink, J. (2026). How to get there from here? Barriers and enablers on the road towards reproducibility in research. Journal of Trial and Error, 1(1), 1-33. https://doi.org/10.36850/f4c4-4a38

Launch of MORPHSS Catalogue

Samuel Moore, Miranda Lynn Barnes and colleagues launched the Materialising Open Research Practices in the Humanities and Social Sciences (MORPHSS) catalogue: https://catalogue.morphss.work/

The catalogue is a living, iterative, and collaborative showcase of open research practices in the arts, humanities, and social sciences (AHSS).

For further information, please see the accompanying report by Jenni Adams et al.:

We identify 30 open research practices in AHSS, among which we observe six key forms of openness: process openness, evidentiary openness, availability of outputs, the accessible communication of research, participatory openness and epistemic openness. Based on those identified, we find that open research practices in AHSS are diverse, extending beyond the suite of practices emphasised within dominant accounts of Open Science.

Adams, J., Barnes, M., Moore, S., Pinfield, S., & Soni, S. (2026). Openness in the arts, humanities and social sciences: Documenting open research practices beyond STEM (A MORPHSS Project Report) (p. 51). MORPHSS Project. https://doi.org/10.17613/h10rz-qk035

Reconsidering Reproducibility and Questionable Research Practices in Animal-Based Biomedicine

Simon Lohse draws on critical metascientific work in his discussion of reproducibility and questionable research practices in animal-based biomedicine. He argues that mainstream metascientific treatments of reproducibility need refining in this field:

Critical analyses of meta-science emphasise the concept of epistemic diversity and question the extent to which general guidelines for improving replicability or validity can do justice to the heterogeneity of scientific practices and subject areas (esp. Leonelli, 2018, 2022). One of the main aims of this paper is thus to help shift the focus of the meta-scientific replication debate in animal-based research, so that it better reflects these aspects and concerns.

Lohse also calls for a more discipline- and context-specific consideration of questionable research practices:

In many cases, it will be highly context-dependent which methodological decisions are problematic – as their soundness depends on research aim, (evolving) local practices, specific challenges etc. (Fiedler & Schwarz, 2016; Sacco et al., 2019). As I have attempted to show, the assessment of a specific decision or procedure as problematic may, at times, also depend on ethical considerations. This conclusion casts further doubt on the usefulness of developing guidelines for good scientific practice with strongly universalistic tendencies, including those aimed at large scientific fields, such as medicine and psychology. Rather than relying pre-dominantly on such guidelines and a “top down approach” (Fletcher, 2021), we should pay more attention to local methodological norms engrained in specific epistemic practices and cultures; norms about which we do not know nearly enough, indicating the need for more qualitative studies informed by a philosophy of science/STS perspective.

Lohse, S. (2026). Reproducibility, questionable research practices and ethico-epistemic trade-offs in animal-based biomedicine. European Journal for Philosophy of Science, 16, 25. https://doi.org/10.1007/s13194-026-00727-y

Naming Individual Studies in Metascience Work

Kai Sassenberg and colleagues respond to a recent proposal by Malte Elson et al. (2026) that, “as a default, meta-scientific studies of published research artefacts need to include…a full, identifiable list of included studies.” Sassenberg et al. counter that “publishing non-anonymized data might pose unjustified harm to original authors.” Part of this disagreement stems from differing conceptions of the tasks and limits of metascience. As Sassenberg et al. explain:

The point on which we most strongly disagree with Elson et al. (2026) is their apparent understanding of metascience. Their description of the field implies that metascience is mainly about identifying flaws, errors, inconsistencies, and deviations, as epitomized in the very first sentences of their contribution: “Meta-science is the study of quality, bias, and efficiency in research. To this end, metascience often examines and analyses research artefacts from existing studies – such as published articles, preregistration documents, analysis code, and datasets” (p. 1). This suggests that metascience is basically a monitoring activity, by which researchers who know what is right and wrong (i.e., the correct way of “doing science”) evaluate original research and flag it. According to Elson et al. (2026), metascience is about appraising “..., for example, how complete preregistrations are, how often key reporting elements are missing, or how frequently analytic flexibility appears” (p. 2). This understanding of metascience conveys the view that it is objectively discernible what is right or wrong. We do not agree with this view. There is, of course, a blacklist of scientific practices that are clearly unethical (e.g., fabricating data, making research participants identifiable without explicit consent, plagiarizing ideas, etc.), but there is a much larger area of practices that are not scientifically questionable per se – at least not without knowing the specific underlying intentions (e.g., deciding to remove single cases in a dataset, deviating from a preregistration, etc.).

Elson, M., Hussey, I., Clarke, B., Norwood, S. F., Grinschgl, S., Arslan, R. C., … Cummins, J. (2026, February 13). Against anonymising meta-scientific data. PsyArXiv. https://doi.org/10.31234/osf.io/6eyjf_v1

Sassenberg, K., Hahn, L., Hellmann, J. H., & Gollwitzer, M. (2026, March 19). The perils of non-anonymized data in metascience. PsyArXiv. https://doi.org/10.31234/osf.io/zj8bk_v1